The two sides of the coin

AI tools and the content they generate are also the focus of researcher Dr. Dafna Burema. The sociologist is a postdoctoral researcher at the Cluster of Excellence Science of Intelligence (SCIoI) at the Technical University of Berlin. Her research questions pertain to human behavior and society in the context of technology: “I look at how (and why) people create artificial intelligence and, above all, have an eye on the ethical issues involved. Are ethical considerations taken into account and correctly applied when creating AI tools? Are the new technologies helpful or not?”

AI has already made its presence felt in everyday life

Almost everyone already uses AI in everyday life without realizing it: in streaming services, when shopping online, through spam filters or when navigating. It is now almost impossible not to use AI, says Dafna Burema. Generative AI, however, goes one step further: it creates entirely new content based on its training data, such as texts, images, music or videos.

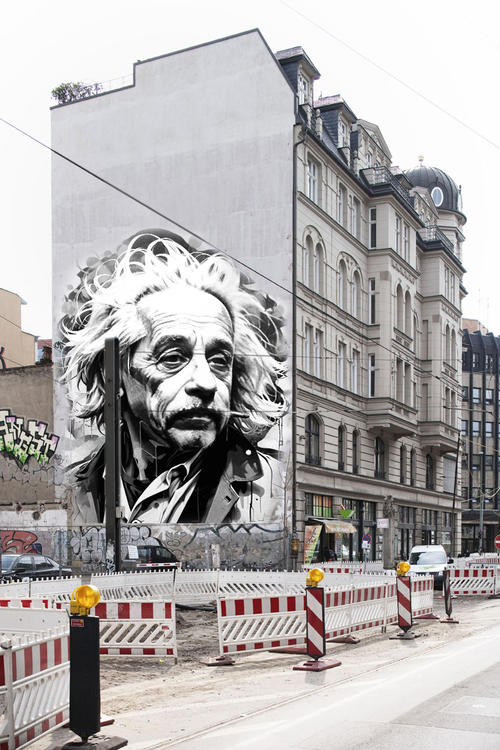

Such AI tools can be very helpful for science communication: text programs can quickly translate, simplify and shorten complex and extensive scientific publications, for example, so that non-experts can understand them too. Given the right prompts, image programs can create images of difficult-to-observe phenomena or abstract concepts. They can illustrate data and patterns and appeal to a wide variety of target groups. AI can therefore help to ensure that research and its results are communicated in a more comprehensible and accessible way.

But there are also risks, such as the manipulation of content or the fabrication of false information. Data protection and intellectual property rights are further points of criticism often raised in connection with AI: the models are usually trained on texts, images, artworks and data without the permission of their authors and owners. Transparency is also a highly relevant issue: does AI content have to be labeled as such, or can users be left in the dark about the fact that what they are reading or viewing was not created by a human? And finally, AI content is not infallible. The term “AI bias” describes how human prejudices influence AI algorithms and lead to distorted results, which can then disadvantage certain social groups, for example.

Transparency is important

“The research field of AI and ethics is about taking a closer look at all these uncertainties and gray areas, and developing guidelines and rules for the use of AI,” says Dafna Burema, describing one of her research tasks, which sits at the interface between sociology and technology. “And also the question of whether and why we want and need AI at all.”

Dafna Burema considers transparency to be one of the most important criteria when dealing with AI. “Many issues and risks result from people not knowing that they are interacting with artificial intelligence or consuming content that has been generated using AI.” Bots (automated software programs), deepfakes and fake news are the negative examples: with the help of AI, they can be generated in enormous quantities at the touch of a button, and they spread rapidly, especially on social media. Conversely, Dafna Burema is convinced that AI can make content accessible to many people for the first time and support scientists in communicating their results and messages to a broad population. “AI can also have a democratizing effect,” emphasizes the researcher. “For example, when language barriers are overcome with the help of chat or translation programs.” Ultimately, AI, like so many technological achievements, is a tool that harbors risks but can also do a lot of good. “There are two sides to the coin, and we have to handle it carefully and critically,” summarizes the researcher. “We shouldn’t be too pessimistic, because the benefits of AI are enormous in numerous areas.”